PacketRadio.ca

Packet Radio Legacy

APRS, BBS, AXIP, NET/ROM — A Plain Explanation

What APRS, BBS forwarding, AXIP tunneling, and NET/ROM routing actually did on the AX.25 packet network, written for readers who want the function rather than the jargon.

Four acronyms came up constantly in the SOPRA network news, and they will come up constantly in any old packet documentation you read: APRS, BBS, AXIP, NET/ROM. They are not the same kind of thing. APRS and BBS are services that a user touched. AXIP and NET/ROM are infrastructure that the user did not touch but that made the services work. This page explains each in the order that makes them easiest to understand, with the minimum jargon required to be honest.

BBS — the message store

A packet BBS, or bulletin board system, was a station that stayed on the air around the clock and accepted connections from other amateurs. When you connected, you got a prompt. From the prompt you could list messages addressed to your callsign, read them, send new ones, post bulletins to topical categories, and download files. The interface was text. The commands were short — L to list, R 12345 to read message 12345, S VE3ABC to send to a callsign.
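To make the command style concrete, here is a toy sketch of a BBS prompt in Python. It is not modelled on any particular BBS program. The `MessageStore` and `Session` classes, the starting message number, the `Subject:` prompt, and the use of `/EX` to end a message are all illustrative assumptions. What it shows is the shape of the interaction: `L` lists messages for the connected callsign, `R` reads one by number, and `S` starts composing a message to another callsign.

```python
from dataclasses import dataclass, field

@dataclass
class Message:
    number: int
    to: str
    sender: str
    subject: str
    body: str

@dataclass
class MessageStore:
    messages: dict = field(default_factory=dict)
    next_number: int = 12345  # arbitrary starting number for the example

    def add(self, to, sender, subject, body):
        msg = Message(self.next_number, to, sender, subject, body)
        self.messages[msg.number] = msg
        self.next_number += 1
        return msg.number

class Session:
    """One user's connection to the hypothetical BBS prompt."""

    def __init__(self, store, user):
        self.store = store
        self.user = user.upper()
        self.draft = None  # dict while composing a message, otherwise None

    def line(self, text):
        if self.draft is not None:
            return self._compose(text)
        parts = text.split()
        if not parts:
            return ">"
        cmd, args = parts[0].upper(), parts[1:]
        if cmd == "L":  # list messages addressed to this user
            mine = [m for m in self.store.messages.values() if m.to == self.user]
            if not mine:
                return "No messages."
            return "\n".join(f"{m.number} {m.sender:<9} {m.subject}" for m in mine)
        if cmd == "R" and len(args) == 1 and args[0].isdigit():  # read by number
            msg = self.store.messages.get(int(args[0]))
            if msg is None:
                return f"No such message {args[0]}"
            return f"From: {msg.sender}\nTo: {msg.to}\nSubject: {msg.subject}\n\n{msg.body}"
        if cmd == "S" and len(args) == 1:  # start composing to a callsign
            self.draft = {"to": args[0].upper(), "subject": None, "lines": []}
            return "Subject:"
        return "Unknown command"

    def _compose(self, text):
        if self.draft["subject"] is None:
            self.draft["subject"] = text
            return "Enter message, /EX to end:"
        if text.strip().upper() == "/EX":  # end of message text
            d, self.draft = self.draft, None
            number = self.store.add(d["to"], self.user, d["subject"], "\n".join(d["lines"]))
            return f"Msg {number} saved"
        self.draft["lines"].append(text)
        return ""
```

A session for one callsign sends a message with `S VE3ABC`, and a later session for VE3ABC sees it with `L` and reads it with `R 12345`.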

What made the BBS network into a network, rather than a set of isolated message stores, was forwarding. A BBS in Toronto knew about a BBS in Hamilton, knew about a BBS in Oshawa, knew about a BBS in Nova Scotia. When you posted a message to a callsign that was not local, the BBS would, on a schedule, hand the message off to whichever neighbour was closest in the routing sense to the destination. That neighbour would do the same. Eventually the message landed at a BBS where the destination callsign had a mailbox, and would sit there until the destination operator next connected.
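The forwarding behaviour can be sketched as a queue-and-hand-off loop. In this hypothetical Python model the BBS names (`TORBBS`, `OSHBBS`, and so on) and the callsign VE1XYZ are invented, the routing table is a hand-written dictionary, and each call to `forwarding_cycle()` stands in for one scheduled forwarding session in which every BBS passes each queued message one hop closer to the BBS holding the destination's mailbox.

```python
from collections import defaultdict

class ForwardingNetwork:
    """Toy store-and-forward mail network; all BBS names are hypothetical."""

    def __init__(self, home_bbs, next_hop):
        self.home_bbs = home_bbs            # callsign -> BBS that holds its mailbox
        self.next_hop = next_hop            # BBS -> {destination BBS -> neighbour to hand to}
        self.outbox = defaultdict(list)     # BBS -> messages waiting for the next session
        self.mailboxes = defaultdict(list)  # callsign -> messages waiting to be read

    def post(self, at_bbs, to_call, text):
        self._accept(at_bbs, {"to": to_call, "text": text, "path": [at_bbs]})

    def _accept(self, bbs, msg):
        if self.home_bbs[msg["to"]] == bbs:
            self.mailboxes[msg["to"]].append(msg)  # delivered; sits until the user connects
        else:
            self.outbox[bbs].append(msg)           # queued for the next scheduled session

    def forwarding_cycle(self):
        """One scheduled round: each queued message moves exactly one hop."""
        pending, self.outbox = self.outbox, defaultdict(list)
        for bbs, messages in pending.items():
            for msg in messages:
                neighbour = self.next_hop[bbs][self.home_bbs[msg["to"]]]
                msg["path"].append(neighbour)
                self._accept(neighbour, msg)

net = ForwardingNetwork(
    home_bbs={"VE3ABC": "HAMBBS", "VE1XYZ": "NSBBS"},
    next_hop={
        "TORBBS": {"HAMBBS": "HAMBBS", "NSBBS": "OSHBBS"},
        "OSHBBS": {"NSBBS": "NSBBS"},
    },
)
```

Posting at `TORBBS` to VE1XYZ takes two forwarding cycles: the first hands the message to `OSHBBS`, the second delivers it to `NSBBS`, where it waits. Real forwarding also had to handle duplicates, loops, and bulletins addressed to many BBSes at once, none of which this sketch attempts.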

The closest modern analogy is store-and-forward email from before always-on connectivity. The closest historical analogy is the postal service, run by volunteers, with the route negotiated between station operators. In the SOPRA footprint the central BBS sites were VE3CON in Toronto / Weston and VE3INF in Acton — both detailed in the node archive.

APRS — position and messaging in the open

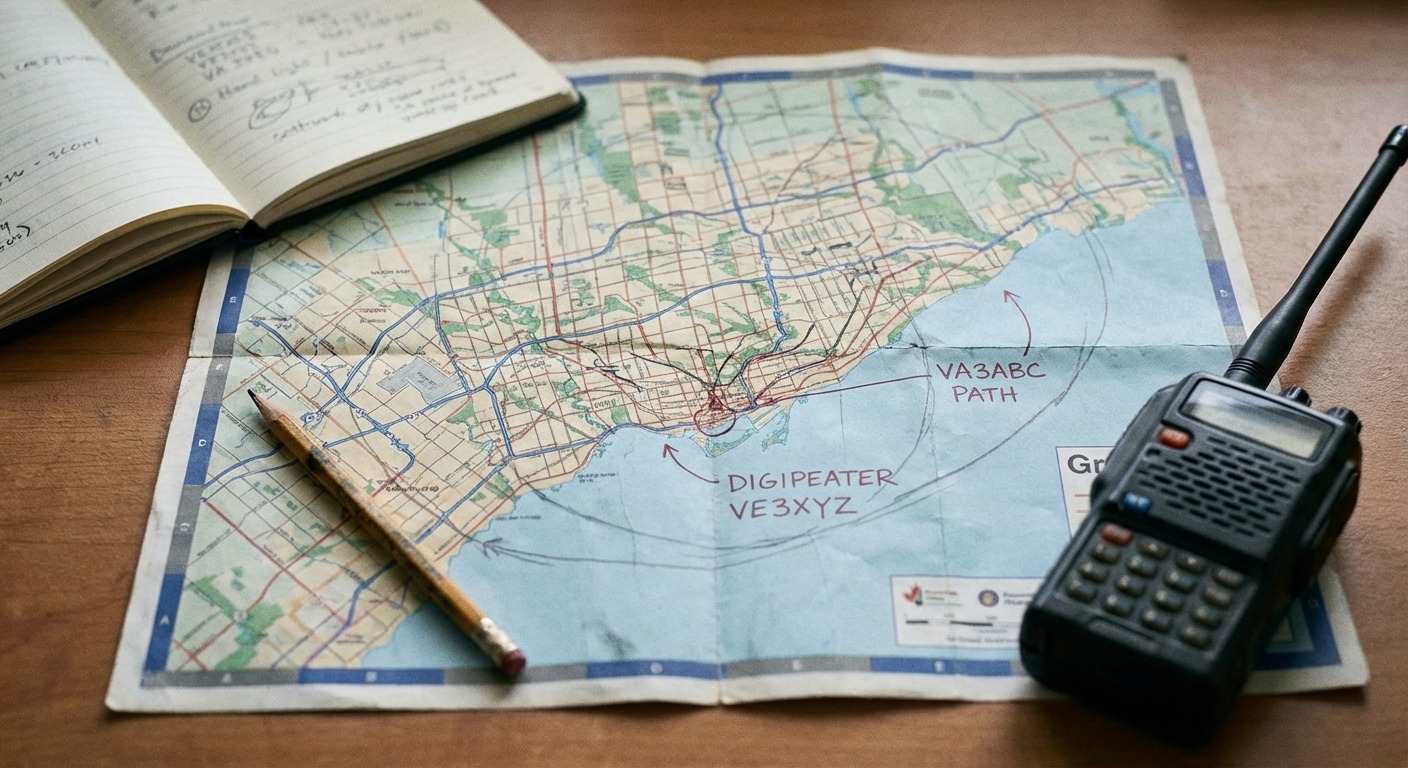

APRS — the Automatic Packet Reporting System — was Bob Bruninga's idea, and it took the AX.25 frame and used it differently. Instead of point-to-point connections between two callsigns, APRS frames were broadcast: every station in range received them, and digipeaters re-transmitted them out to a wider area according to a hop-count path. The most common APRS frame contained a station's GPS position, a symbol code that said what kind of station it was, and an optional comment field.
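As an illustration of what goes into such a frame, the sketch below builds the information field of an uncompressed APRS position report in the text form given in the APRS protocol specification: a `!` data-type character, latitude in degrees and decimal minutes, a symbol-table character, longitude, a symbol code, and an optional comment. The function names and defaults are hypothetical; `/-` (house) and `/>` (car) are standard primary-table symbols.

```python
def _degrees_minutes(value, degree_digits):
    """Format |value| degrees as DDMM.mm (or DDDMM.mm), rounded to 0.01 minute."""
    hundredths = round(abs(value) * 6000)  # 60 minutes x 100 hundredths per degree
    degrees, rest = divmod(hundredths, 6000)  # divmod avoids ever printing "60.00"
    return f"{degrees:0{degree_digits}d}{rest // 100:02d}.{rest % 100:02d}"

def aprs_position(lat, lon, symbol_table="/", symbol="-", comment=""):
    """Uncompressed APRS position report without timestamp ('!' data type)."""
    lat_text = _degrees_minutes(lat, 2) + ("N" if lat >= 0 else "S")
    lon_text = _degrees_minutes(lon, 3) + ("E" if lon >= 0 else "W")
    return f"!{lat_text}{symbol_table}{lon_text}{symbol}{comment}"

print(aprs_position(43.6532, -79.3832, symbol=">"))
```

For a point in downtown Toronto with the car symbol this yields `!4339.19N/07922.99W>`. Speed, course, and altitude extensions are left out to keep the sketch short.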

- Position beacon: the basic APRS transmission. A latitude and longitude, optionally an altitude, a speed and course if moving, and a symbol. Sent every few minutes from a mobile, or once and then occasionally from a fixed station.

- WIDE digipeating: a path convention, usually WIDE1-1,WIDE2-2, that told digipeaters how many further hops the frame should take. Network discipline around path length is the difference between an APRS network that works and one that floods itself.

- I-gate: a station that bridged RF APRS traffic to the internet APRS-IS backbone, so that a position beacon transmitted on 144.39 MHz in Acton could be visible on a website to anyone in the world a few seconds later.

- Messaging: APRS also carried short text messages between callsigns, with acknowledgement. It was less private than a BBS connection, since every station in earshot heard it, but it was real-time.
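The hop-count mechanics behind WIDEn-N can be sketched in a few lines. This is a simplified Python model, not the logic of any specific digipeater firmware: the path is a list of strings, a trailing `*` marks a hop that has already been used, and each digipeater decrements the first unused `WIDEn-N` entry and inserts its own callsign as a trace. Real digipeaters also suppress duplicate frames and apply local policy, such as capping the largest N they will honour; those parts are omitted, and the `DIGI1`-style names are placeholders.

```python
import re

# WIDEn-N: n is the original hop count, N is the number of hops remaining.
WIDE = re.compile(r"^WIDE([1-7])-([1-7])$")
MAX_DIGIS = 8  # an AX.25 header holds at most eight digipeater addresses

def digipeat(path, my_call):
    """Return the rewritten path if this station should repeat the frame, else None."""
    for i, hop in enumerate(path):
        if hop.endswith("*"):
            continue  # already consumed by an earlier digipeater
        match = WIDE.match(hop)
        if match is None:
            return None  # next hop is not a WIDEn-N alias this sketch handles
        n, remaining = int(match.group(1)), int(match.group(2)) - 1
        new_hop = f"WIDE{n}-{remaining}" if remaining > 0 else f"WIDE{n}*"
        # Record who repeated the frame, if there is still room in the header.
        trace = [my_call + "*"] if len(path) < MAX_DIGIS else []
        return path[:i] + trace + [new_hop] + path[i + 1:]
    return None  # every hop has been used: the frame stops here
```

Starting from `WIDE1-1,WIDE2-2`, three successive digipeaters repeat the frame and a fourth declines, which is the point of the convention: the path a sender chooses caps how far one beacon can spread.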

In Canada APRS lives on 144.39 MHz, the North American standard frequency. In the SOPRA footprint VE3YAP in Acton ran APRS, sharing an antenna with the VE3INF BBS via a re-tuned duplexer, a small detail that tells you a lot about how packet sites were actually operated. APRS is also where the bridge between amateur packet and modern web infrastructure is most obvious: aprs.fi is, functionally, a web frontend on a network that still runs largely over VHF.

NET/ROM — the routing layer

AX.25 by itself does not really know about routing. It knows about a source callsign, a destination callsign, and a path of digipeaters that you specify by hand. That works fine when you know exactly which digipeaters to use. It does not work for a user who just wants to type C VE3ABC and have the network figure out how to get there.
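Because the digipeater path lives in the frame header itself, it helps to see how AX.25 lays out those addresses. The sketch below follows the AX.25 2.0 address encoding as commonly documented: each address is seven bytes, six callsign characters shifted left one bit and padded with spaces, then an SSID byte, with the low bit of the final SSID byte marking the end of the address field. It builds a UI frame (control byte 0x03, PID 0xF0), the frame type APRS uses. The function names are hypothetical; the C bit is set in the destination as for a command frame, and the digipeater "has been repeated" bits are left clear because nothing has repeated the frame yet. Note that the header has room only for explicit hops, which is exactly the limitation NET/ROM addressed.

```python
def split_call(text):
    """'VE3ABC-7' -> ('VE3ABC', 7); a bare callsign has SSID 0."""
    call, _, ssid = text.upper().partition("-")
    return call, int(ssid) if ssid else 0

def encode_address(text, last, c_or_h_bit=False):
    call, ssid = split_call(text)
    if not (1 <= len(call) <= 6) or not (0 <= ssid <= 15):
        raise ValueError(f"bad address {text!r}")
    out = bytearray(ord(ch) << 1 for ch in call.ljust(6))  # shifted ASCII, space padded
    ssid_byte = 0x60 | (ssid << 1)  # reserved bits set, SSID in bits 1-4
    if c_or_h_bit:
        ssid_byte |= 0x80  # C bit (destination/source) or H bit (digipeater)
    if last:
        ssid_byte |= 0x01  # extension bit: this is the last address
    out.append(ssid_byte)
    return bytes(out)

def ui_frame(dest, source, path, info):
    """Address field + UI control byte + PID 'no layer 3' + information field."""
    if len(path) > 8:
        raise ValueError("AX.25 allows at most eight digipeaters")
    addresses = [dest, source] + list(path)
    header = b"".join(
        encode_address(a, last=(i == len(addresses) - 1), c_or_h_bit=(i == 0))
        for i, a in enumerate(addresses)
    )
    return header + bytes([0x03, 0xF0]) + info
```

Every hop a user names adds seven bytes to every frame, and the sender must know each one in advance.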

NET/ROM solved that. A NET/ROM node was a packet station that ran routing software (originally the proprietary NET/ROM firmware, later compatible implementations such as G8BPQ's BPQ32 and the routing layer in JNOS). NET/ROM nodes periodically broadcast their routing tables to their neighbours, much as an internet router exchanges routes with its peers. When you connected to a NET/ROM node you got a prompt, and from that prompt you could connect to any node in the routing table without specifying the path. The node software figured it out.
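The table exchange can be sketched as "merge each neighbour's broadcast, keep the best route per destination". This Python sketch assumes the quality arithmetic commonly described for NET/ROM, in which a route learned through a neighbour gets quality (advertised quality × link quality + 128) / 256 with integer division, and routes below a minimum quality are ignored. The threshold of 50, the quality numbers, VE3XYZ, and the function name are hypothetical; route aging and node aliases are left out.

```python
from dataclasses import dataclass

@dataclass
class Route:
    neighbour: str  # adjacent node to hand the connection to
    quality: int    # 0-255, higher is better

def merge_broadcast(table, neighbour, link_quality, advertised, min_quality=50):
    """Fold one neighbour's routing broadcast into our table."""
    # The neighbour itself is one hop away at the quality of our link to it.
    candidates = {neighbour: link_quality}
    for node, quality in advertised.items():
        if node == neighbour:
            continue
        # A route can be no better than the link we reach it through.
        candidates[node] = (quality * link_quality + 128) // 256
    for node, quality in candidates.items():
        if quality < min_quality:
            continue  # too poor to be worth using
        current = table.get(node)
        if current is None or quality > current.quality:
            table[node] = Route(neighbour, quality)
    return table
```

Once the table is built, a user's `C VE3INF` only requires the node to look up `table["VE3INF"].neighbour` and pass the connection along; the next node repeats the lookup from its own table.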

The classic packet-user experience — connect to your local node, then connect from there to a node three hops away, then from there to a BBS — was NET/ROM at work. The user only had to know two things: their local node's callsign, and the alias of wherever they wanted to end up. The intermediate hops happened automatically.

AXIP — AX.25 over IP

By the 2000s, two things were obviously true. First, the public internet had become reliable and cheap. Second, a lot of the long-haul RF backbone links between BBSes had degraded or gone away as tower sites were lost or operators retired. AXIP — AX.25 over IP — was the practical response.

An AXIP link is, mechanically, an AX.25 frame encapsulated inside an IP datagram (either directly or inside UDP) and sent over the public internet between two BBS endpoints. Each end of the link still presents native AX.25 to the local RF user. From the user's point of view nothing has changed: they connect to their local BBS, post a message addressed to a callsign on a system across the continent, and the message arrives. What changed is that one of the hops in the middle, instead of being a 1200-baud RF link with marginal propagation, is an IP hop between two Linux boxes that completes in milliseconds.
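A minimal sketch of the encapsulation step, in Python, assuming UDP transport (often called AXUDP) and the framing used by common implementations such as ax25ipd, where each datagram carries one raw AX.25 frame followed by its two-byte frame check sequence. RFC 1226 describes the related encapsulation directly in IP. The port number and the function names are assumptions for illustration. The receiving end recomputes the checksum, drops any datagram that fails, and hands the frame to its local AX.25 stack as if it had arrived over RF.

```python
import socket

AXUDP_PORT = 10093  # assumed conventional default; real links use whatever the peers agree on

def ax25_fcs(data: bytes) -> int:
    """CRC-16/X.25: the HDLC frame check sequence used by AX.25."""
    crc = 0xFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0x8408  # reflected form of polynomial 0x1021
            else:
                crc >>= 1
    return crc ^ 0xFFFF

def wrap(frame: bytes) -> bytes:
    """One AX.25 frame for the tunnel: frame bytes, then FCS low byte first."""
    return frame + ax25_fcs(frame).to_bytes(2, "little")

def unwrap(packet: bytes):
    """Return the AX.25 frame if the FCS checks out, else None (drop the datagram)."""
    if len(packet) < 3:
        return None
    frame, fcs = packet[:-2], int.from_bytes(packet[-2:], "little")
    return frame if ax25_fcs(frame) == fcs else None

def send_frame(sock, frame, peer_host, peer_port=AXUDP_PORT):
    """Send one encapsulated frame to the far end of the tunnel."""
    sock.sendto(wrap(frame), (peer_host, peer_port))
```

Nothing above knows or cares what is inside the frame: a BBS forwarding session, a NET/ROM broadcast, and an APRS beacon all cross the tunnel the same way.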

This is exactly the trick that VE3CON's BBS adopted when its forwarding peer list grew to include systems in Washington state, California, Nova Scotia, and BC. None of those links were ever going to work on RF from Toronto. AXIP made them work over IP without changing the user-facing protocol. It is the same architectural pattern that community broadcasters now use when they keep an FM transmitter on the air and run the long-haul programme feed over the internet.

A short note on the layering

Reading the four pieces against each other, the layering is straightforward once you see it. AX.25 is the link layer — the format of the actual frames moving over the air. NET/ROM is a network layer on top of AX.25, doing routing between nodes so that users do not have to specify paths by hand. AXIP is a tunnelling layer that encapsulates AX.25 frames inside IP packets so that two endpoints can exchange them over the public internet without RF in between. BBS and APRS are both application layers — services that users actually interact with — sitting on top of whatever combination of AX.25, NET/ROM, and AXIP is needed to get the frames where they need to go.

This is the same kind of stack any modern networked service uses. The names are different, the wire speeds are vastly different, but the conceptual separation between addressing, routing, transport, and application is the same. That is part of why packet is still a useful teaching network: it is small enough to hold all four layers in your head at once, and the failure modes are visible — you can hear them on the radio.

How the four fit together

A working operator in the GTA in 2015 might have done the following in a single evening. Put a position beacon on the air via APRS through VE3YAP, so that a friend could see them parked at home. Connected via the local NET/ROM node to VE3CON and posted a personal message to a callsign in Hamilton, which would be forwarded over an RF link to VE3MCH. Read a bulletin in the TCPIP topic that had originated on a BBS in California and arrived on VE3CON via an AXIP forwarding link. Between them, those steps used all four pieces: APRS, NET/ROM, BBS forwarding, and AXIP. None of them looked the same to the user, yet all of them used AX.25 frames at the bottom of the stack.

If you want the higher-level history of how these pieces fit into the operating culture of SOPRA, the SOPRA history page covers it. If you want the snapshot of which nodes were on which frequencies and where, see the node archive. For background on AX.25 itself, the Tucson Amateur Packet Radio archives are still the best public record of the protocol's development.